The holiday season is when digital storefronts make or break retail performance, and our data shows which retailers are truly ready. This report analyzes the NRF Top 50 Global Retailers using Catchpoint’s Global Agent Network of 3,000+ vantage points worldwide and our proprietary Digital Experience Score (see page 17 for methodology). It reveals how leading brands like Apple, Amazon, and IKEA actually perform when customers go online.

*Estimated revenue impact calculated using industry benchmarks for conversion uplift per second faster load time

The table below shows all evaluated retail websites ranked by their overall Digital Experience Score.

How to read the scores:

Curious how your brand compares?

Get a Free Retail Assessment with one of our Internet Performance Monitoring experts.

The following takeaways highlight key insights from our analysis of the NRF Top 50 Global Retailers homepages, from what set top performers apart to where others need to improve.

For actionable steps on how retailers can improve, including concrete recommendations for tackling front-end optimization, global performance gaps, and experience-centric monitoring, see the recommendations later in this report.

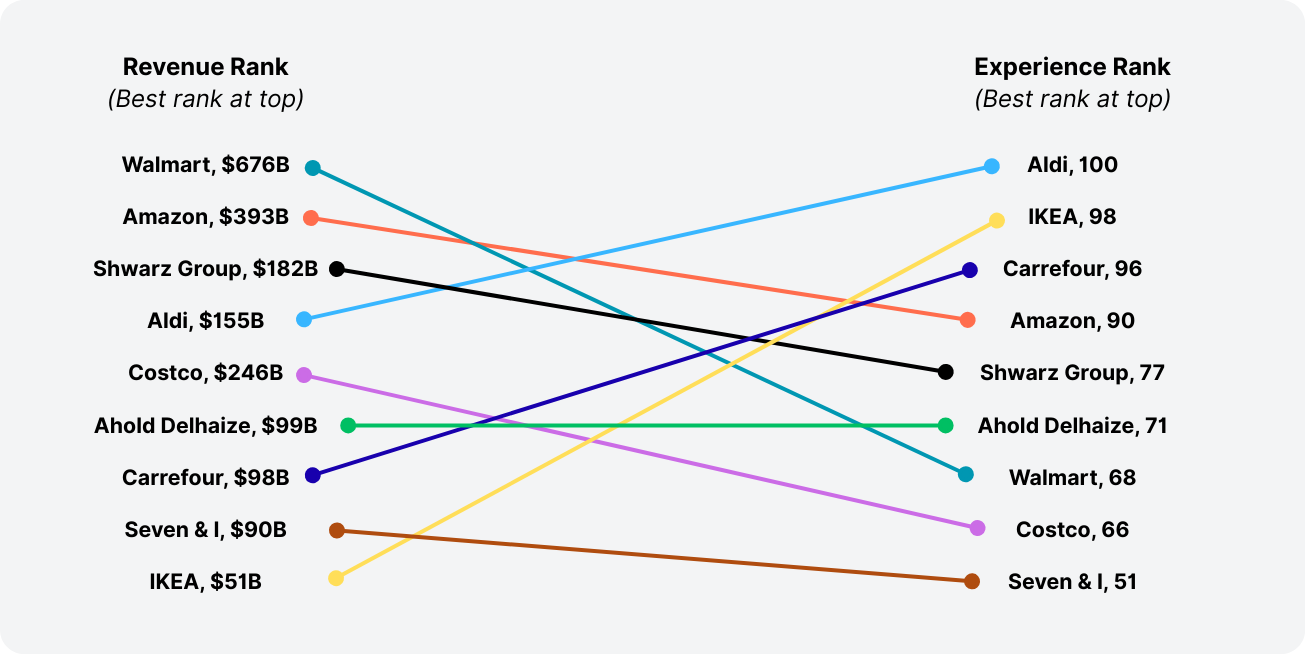

Some of the world’s largest retailers underperform on digital experience, while smaller players deliver industry-leading performance. By comparing company revenues from the NRF Top 50 Global Retailers list with our Experience Scores, we see that scale does not guarantee excellence.

Several smaller or mid-sized retailers go toe-to-toe with, and even outperform, the industry’s largest players.

Meanwhile, some of the biggest names in retail lag in user experience rankings:

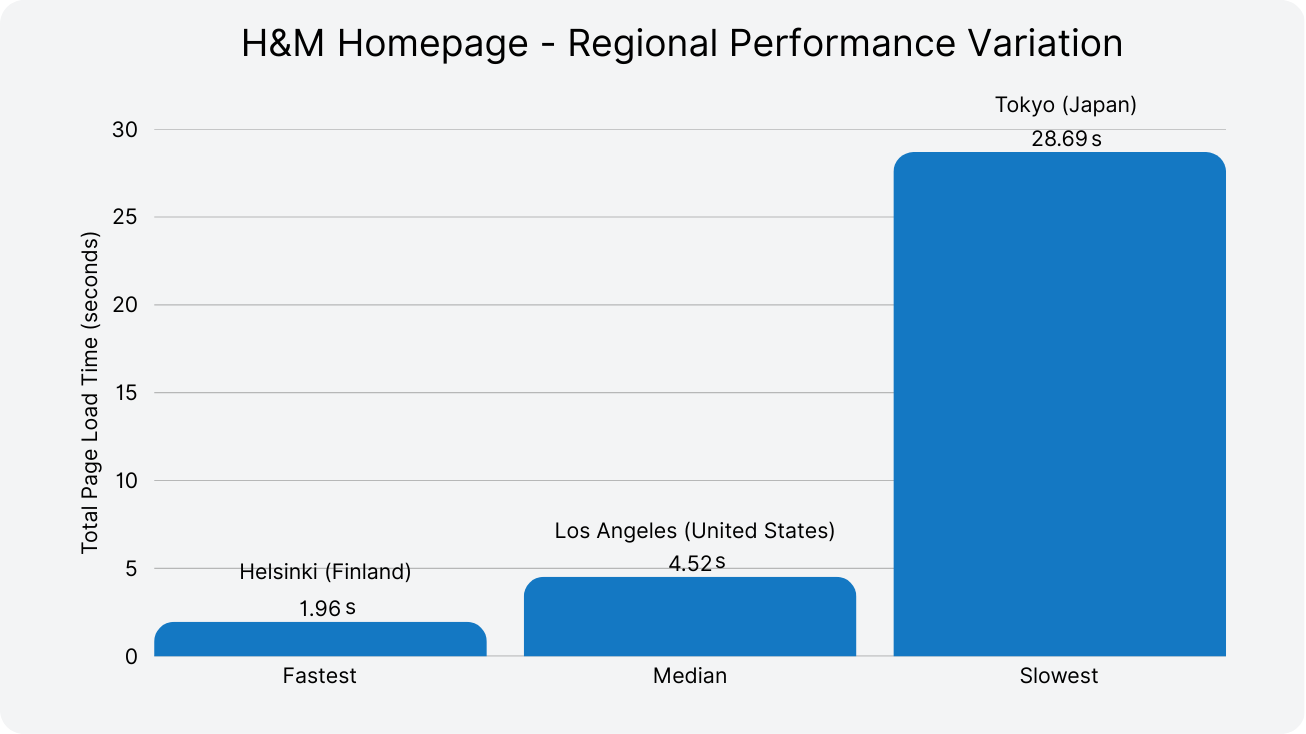

Identical retail websites deliver fundamentally different experiences depending on where customers access them and how those sites are monitored. Testing the same retailer across multiple locations, ISPs, and network types reveals performance variations that traditional monitoring approaches systematically miss.

For example, in one test:

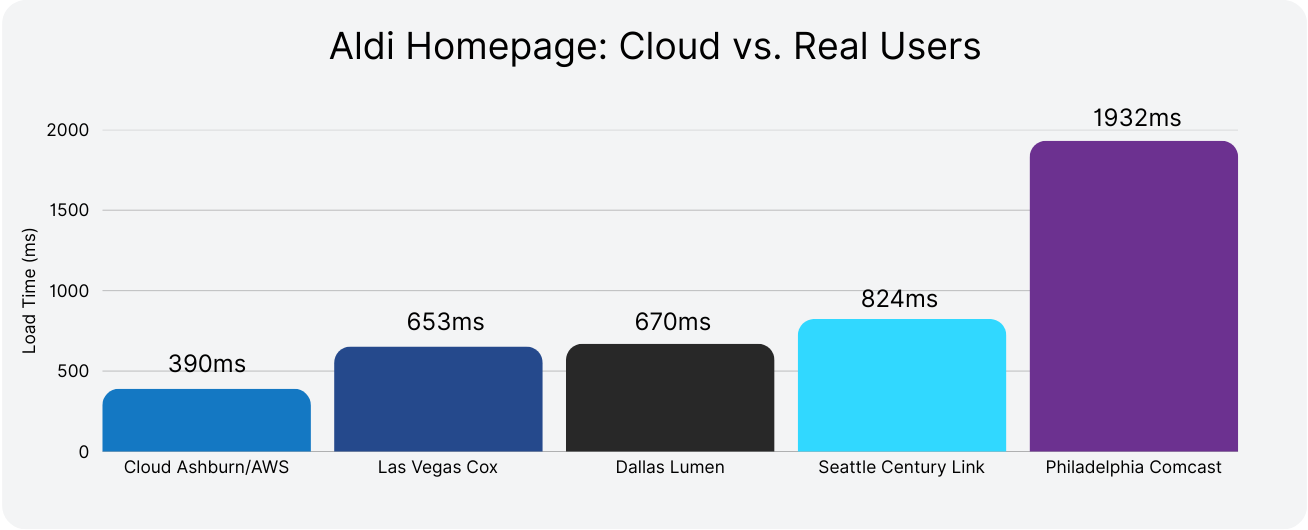

Aldi’s homepage shows a crystal-clear “Cloud vs. Real Users” story. From a U.S. cloud vantage point, it looks like the site loads in under half a second — but real customers wait 2-5× longer depending on their ISP or access type.

Measured at the same time across diverse vantage points, Aldi’s homepage shows just how inconsistent “the same site” can feel.

Philadelphia customers wait 1,932 ms, nearly 2 full seconds longer than cloud metrics suggest.

A retailer seeing strong performance metrics from monitoring in the cloud could be losing substantial revenue in markets where last-mile performance degrades to 2+ seconds, without realizing it. These hidden slowdowns can significantly erode engagement and conversion, especially in mobile-first or emerging markets. Monitoring from the user's perspective, across diverse geographies, ISPs, and network types, is the only way to reveal and address these disparities.
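The gap between cloud and real-user vantage points can be expressed as a simple slowdown factor. The sketch below is a minimal illustration, assuming load-time samples are already grouped by vantage type; the function name, the group labels, and every number in it are hypothetical and are not measurements from this report.

```python
from statistics import median


def slowdown_vs_cloud(samples_ms, baseline="cloud"):
    """Return each vantage type's median load time as a multiple of the baseline median."""
    medians = {kind: median(values) for kind, values in samples_ms.items()}
    base = medians[baseline]
    return {
        kind: round(value / base, 2)
        for kind, value in medians.items()
        if kind != baseline
    }


# Illustrative load times in milliseconds (made up for this example).
samples_ms = {
    "cloud": [420, 450, 480],
    "backbone": [900, 950, 1000],
    "last_mile": [1800, 1900, 2100],
}

factors = slowdown_vs_cloud(samples_ms)
print(factors)
```

With these made-up inputs, the backbone and last-mile factors land inside the 2-5× range described above. Medians are used rather than means so that a single slow sample does not dominate the comparison.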

A 50-point performance spread shows big opportunities for improvement.

From Aldi’s perfect 100 to Alibaba’s score of 50, the digital performance of top global retailers varies dramatically—despite near-universal uptime.

While a few brands offer seamless, responsive, and stable sites across geographies, many others struggle to meet even baseline user expectations. The largest cluster of retailers fell between the mid-60s and low-80s, indicating broad parity with distinct leaders — and clear opportunities for improvement.

Despite 91% of retailers maintaining near-perfect availability, that didn’t guarantee strong user experience. In fact, more than a quarter of retailers scored under 66, indicating experience-level issues such as layout instability, slow rendering, or inconsistent performance across regions.

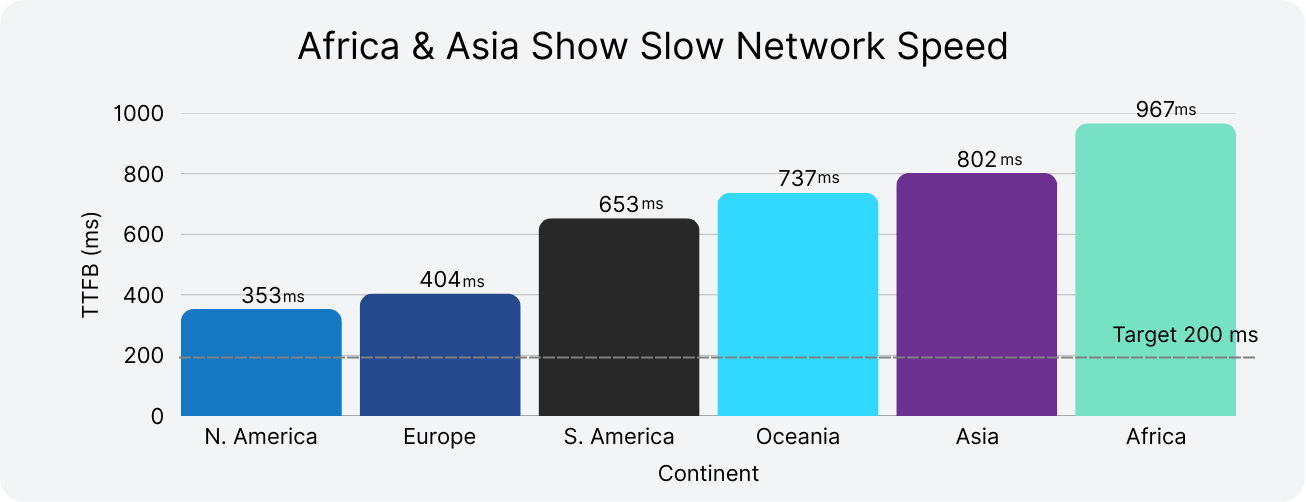

European retailers lead global digital performance with an average Experience Score of 82.2, followed by North America at 77.5. Asian retailers significantly underperform at 66.8 despite representing major global brands, while South American retailers score similarly at 66.0.

American retailers show highly variable performance, averaging 77.3. While leaders like Apple (96) and Amazon (90) excel, major brands including Walmart (68) and Target (54) underperform expectations given their scale and resources.

The performance leaders demonstrate that exceptional digital experiences are achievable at global scale. Aldi leads the rankings with a perfect 100 score, followed closely by comparatively smaller Action (99), IKEA (98), and Euronics International (97). These top performers share common characteristics:

One of the most striking discoveries in this benchmark study is a fundamental flaw in focusing solely on internal metrics or traditional monitoring.

Concerningly, 11 retailers delivered poor user experiences (scores below 70) despite excellent traditional metrics:

Notable Examples:

This represents the classic monitoring blind spot where dashboards show green while users suffer.

Traditional monitoring misses:

This data validates what many SREs and web performance teams suspect: perfect uptime and fast server response times don't guarantee users aren't struggling with slow, frustrating experiences.

Many retailers maintain near-perfect uptime but still deliver disappointing user experiences. In our benchmark, 16 retailers with ≥99.9% uptime scored below 70 on the Digital Experience Score — including major brands like Walmart, Target, and Tesco.

This reinforces a critical insight:

“Being online” no longer means your users are having a good experience.

Even among those with sub-benchmark availability, user experience impact varied widely:

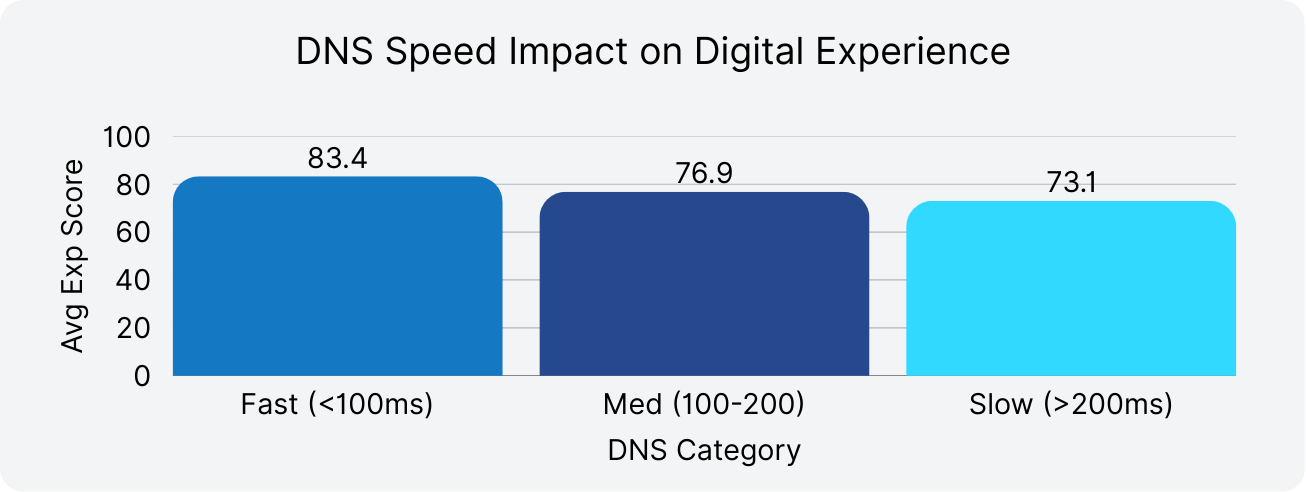

Bar chart showing how DNS response time impacts digital experience scores across retail companies.

DNS drags you down: Retailers with DNS under 100 ms score roughly 10 points higher on digital experience than those over 200 ms, showing that even small network delays can erode user satisfaction.
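To see which side of those thresholds a given lookup falls on, a rough timing check can be sketched as follows. This is a minimal sketch, not the measurement method used in this report: it times the operating system's resolver through Python's standard `socket.getaddrinfo`, so cached answers will look faster than a cold lookup. The function names and the bucket labels are assumptions; only the 100 ms and 200 ms boundaries come from the text above.

```python
import socket
import time


def time_dns_lookup(hostname, resolve=socket.getaddrinfo):
    """Return the wall-clock time of one name lookup, in milliseconds.

    `resolve` is injectable so the timing logic can be exercised offline.
    """
    start = time.perf_counter()
    resolve(hostname, None)
    return (time.perf_counter() - start) * 1000.0


def dns_bucket(elapsed_ms):
    """Place a lookup time into the bands discussed in the text."""
    if elapsed_ms < 100:
        return "under 100 ms"
    if elapsed_ms > 200:
        return "over 200 ms"
    return "100-200 ms"
```

Calling `dns_bucket(time_dns_lookup("www.example.com"))` from several networks gives a first impression of how resolution time differs by location; a single sample from one machine says little on its own.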

With the global retail digital transformation market expanding at 17.6% CAGR to reach $1.04 trillion by 2032, performance laggards risk being systematically excluded from the digital economy’s growth trajectory. Market share isn’t just shifting; it’s being permanently redistributed based on digital experience quality.

The following recommendations outline where to focus resources to close experience gaps, improve operational resilience, and position your brand to win in an increasingly competitive digital retail landscape.

1. Deliver a fast, consistent experience everywhere

The top-ranked retailers, including Aldi, Action, and IKEA, delivered near-perfect uptime and fast load times across geographies. They show that excellent performance at global scale is achievable.

Even visual-heavy sites like IKEA demonstrated how speed and reliability can coexist through smart optimization.

2. Shift from availability-only monitoring to Experience Level Objectives (XLOs)

Most retailers have strong uptime — but that doesn’t mean users are having a good experience.

This outside-in approach is key to identifying blind spots that traditional monitoring misses.
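One way to picture an experience-level objective is as a target on what users see rather than on whether the server answers. The sketch below assumes an objective of the form "at least a given share of page loads finish within a given time"; the class name, the 2,500 ms threshold, the 90% target, and the sample values are all hypothetical and are not Catchpoint's definition of an XLO.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperienceObjective:
    name: str
    threshold_ms: float   # a page load at or under this counts as "good"
    target_ratio: float   # share of loads that must be good, between 0 and 1


def evaluate(objective, samples_ms):
    """Return (share of good samples, whether the objective is met)."""
    if not samples_ms:
        raise ValueError("at least one sample is required")
    good = sum(1 for sample in samples_ms if sample <= objective.threshold_ms)
    ratio = good / len(samples_ms)
    return ratio, ratio >= objective.target_ratio


# Every request below succeeded, so availability is 100%,
# yet the hypothetical experience objective is missed.
objective = ExperienceObjective("homepage load", threshold_ms=2500, target_ratio=0.9)
samples_ms = [900, 1100, 1300, 1500, 1800, 2100, 2400, 2600, 3200, 4100]
ratio, met = evaluate(objective, samples_ms)
print(f"{ratio:.0%} of loads within {objective.threshold_ms} ms, objective met: {met}")
```

The point of the example is the mismatch: an availability check would report all ten loads as successful, while the experience objective flags three of them as too slow.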

3. Optimize for global reach, not just local performance

Retailers with strong domestic infrastructure often underperform internationally — especially in Asia, Africa, and South America.

Global shoppers expect the same seamless experience regardless of geography.

4. Prioritize Front-End Optimization

Even with perfect backend infrastructure, slow, unstable front-ends can destroy user satisfaction.

Top scorers like Carrefour and TJX performed well despite backend limitations, thanks to strong frontend strategies.

5. Treat TTFB as a leading indicator of experience

Time to First Byte (TTFB) reveals backend bottlenecks that cascade through the experience.

Metro AG and Carrefour scored highly despite elevated TTFB, but that’s the exception, not the rule.
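For a quick first look at TTFB from a single machine, the standard library is enough. The sketch below is an approximation under stated assumptions: it uses Python's `http.client`, and the timer covers connection setup (and the TLS handshake when `use_tls` is set) plus the wait until the status line and headers have been read, which is slightly later than the very first byte. The function name and parameters are hypothetical.

```python
import http.client
import time


def measure_ttfb_ms(host, port=80, path="/", timeout=10.0, use_tls=False):
    """Return (HTTP status, approximate time to first byte in milliseconds)."""
    conn_cls = http.client.HTTPSConnection if use_tls else http.client.HTTPConnection
    conn = conn_cls(host, port, timeout=timeout)
    try:
        start = time.perf_counter()
        conn.request("GET", path)       # opens the connection and sends the request
        response = conn.getresponse()   # returns once status line and headers arrive
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        response.read()                 # drain the body so the connection closes cleanly
        return response.status, elapsed_ms
    finally:
        conn.close()
```

For example, `measure_ttfb_ms("www.example.com", 443, use_tls=True)` returns the status code and the elapsed time. Repeating the call from different networks is what makes the number meaningful, since one sample from one location reflects only that path.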

6. Monitor APIs as mission-critical infrastructure

Retail sites rely on APIs for cart logic, personalization, and inventory. Poor-performing APIs silently degrade UX.

API failures often show up as slow UI — not as outright downtime.

7. Benchmark and monitor CDNs for real-world delivery

Not all CDNs perform equally across ISPs and geographies. CDN misalignment creates invisible friction.

Many low-scoring retailers showed elevated load times in Asia and South America despite using a CDN.

8. Benchmark continuously and learn from leaders

Performance is never static — especially in retail. Peak events like Black Friday test real-world resilience.

Small optimizations can elevate mid-tier performers into the top quartile.

9. Monitor from where users actually are to close regional performance gaps

Measuring performance only from cloud locations can miss major slowdowns that customers experience in specific regions or on certain ISPs.

Regional latency gaps are solvable, but only if teams can see them.

10. Make performance a cultural priority

The retailers delivering the best experiences aren’t just using better tools — they’ve made digital performance a strategic priority.

In modern retail, performance is product — and it’s the first impression users remember.

This benchmark evaluated companies from the NRF Top 50 Global Retailers 2025 list, ensuring unbiased representation of the world’s largest and most influential retail brands.

Timeframe

All data was collected between July 14 and August 1, 2025, providing a consistent 3-week snapshot of real-world performance across all monitored sites.

Monitored pages

We tested the public homepages of each retailer — the first touchpoint for most shoppers. This allowed for standardized comparisons across brands, capturing performance as experienced by a typical visitor.

Testing locations

Tests were conducted from 123 global monitoring locations across six continents:

This approach enabled us to capture both global averages and regional disparities in performance.

Ranking methodology

Retailers in this report are ranked using the Catchpoint Digital Experience Score — a holistic, user-centric measurement designed to reflect what customers actually experience when interacting with a website.

Built to reflect the full picture of system health, the Digital Experience Score combines endpoint, network, and application insights into a single, field-proven number between 0 and 100.

This metric cuts through complexity to provide a clear, reliable signal of how your customers (or employees) are experiencing your services — and where performance might be breaking down.

How the score is calculated

The score aggregates three key dimensions:

Together, these scores create a comprehensive and actionable view of user experience quality — one that traditional uptime and infrastructure metrics alone can’t deliver.

Read our guide for a deeper dive into the Digital Experience Score and how it’s calculated.

Metrics tested

In addition to the Catchpoint Digital Experience Score, we captured performance across eight core technical metrics.


Explicit Congestion Notification (ECN) is a longstanding mechanism in the IP stack that allows the network to help endpoints "foresee" congestion between them. The concept is straightforward: if a nearly congested piece of network equipment, such as an intermediate router, could tell its destination, "Hey, I'm almost congested! Can you two slow down your data transmission? Otherwise, I’m worried I will start to lose packets...", then the two endpoints can react in time to avoid the packet loss, paying only the price of a minor slowdown.

ECN bleaching occurs when a network device at any point between the source and the endpoint clears or “bleaches” the ECN flags. Since you must arrive at your content via a transit provider or peering, it’s important to know if bleaching is occurring and to remove any instances.

With Catchpoint’s Pietrasanta Traceroute, we can send probes with IP-ECN values different from zero to check hop by hop what the IP-ECN value of the probe was when it expired. We may be able to tell you, for instance, that a domain is capable of supporting ECN, but an ISP in between the client and server is bleaching the ECN signal.

ECN is an essential requirement for L4S since L4S uses an ECN mechanism to provide early warning of congestion at the bottleneck link by marking a Congestion Experienced (CE) codepoint in the IP header of packets. After receipt of the packets, the receiver echoes the congestion information to the sender via acknowledgement (ACK) packets of the transport protocol. The sender can use the congestion feedback provided by the ECN mechanism to reduce its sending rate and avoid delay at the detected bottleneck.

ECN and L4S need to be supported by the client and server but also by every device within the network path. It only takes one instance of bleaching to remove the benefit of ECN since if any network device between the source and endpoint clears the ECN bits, the sender and receiver won’t find out about the impending congestion. Our measurements examine how often ECN bleaching occurs and where in the network it happens.
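The hop-by-hop check described above can be sketched in a few lines. This is a minimal illustration and not Pietrasanta Traceroute's implementation: it assumes the per-hop results are already available as `(ttl, observed_ecn)` pairs, with `None` for hops that did not answer, and the function name and sample path are hypothetical. The ECN codepoint values themselves follow the standard two-bit encoding.

```python
# Two-bit ECN codepoints in the IP header.
NOT_ECT = 0b00  # not ECN-capable, which is also what a bleached packet looks like
ECT_1 = 0b01
ECT_0 = 0b10
CE = 0b11       # Congestion Experienced


def locate_bleaching(sent_ecn, hops):
    """Return (last hop still showing an ECN mark, first hop showing it cleared).

    Returns None if no responding hop reported a cleared field. The device
    responsible sits somewhere after the first hop in the pair and at or
    before the second.
    """
    if sent_ecn == NOT_ECT:
        raise ValueError("the probe must carry a non-zero ECN value")
    last_marked = None
    for ttl, observed in hops:
        if observed is None:        # hop did not reply, so nothing can be concluded
            continue
        if observed == NOT_ECT:
            return last_marked, ttl
        last_marked = ttl           # ECT(0), ECT(1) or CE: the field survived this far
    return None


# Hypothetical path: the mark survives to hop 2, hop 3 is silent, hop 4 shows it cleared.
path = [(1, ECT_0), (2, ECT_0), (3, None), (4, NOT_ECT), (5, NOT_ECT)]
print(locate_bleaching(ECT_0, path))
```

A hop that reports CE is treated as intact here, because a change to CE signals congestion rather than bleaching. Silent hops widen the range in which the bleaching device may sit, which is why the function returns a pair instead of a single hop.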

ECN has been around for a while, but with growing data volumes and rising expectations for user experience, particularly for streaming, ECN is vital for L4S to succeed, and major investments are being made by large technology companies worldwide.

L4S aims to reduce packet loss, and hence the latency caused by retransmissions, and to provide as responsive a set of services as possible. In addition, we have seen significant momentum from major companies lately, which always helps push a new protocol toward deployment.

If ECN bleaching is found, this means that any methodology built on top of ECN to detect congestion will not work.

Thus, you cannot rely on the network to achieve what you want, i.e., to avoid congestion before it occurs: potential congestion is signaled by setting the Congestion Experienced codepoint (CE, binary 11, decimal 3) in the ECN field when detected, and bleaching would wipe out that information.

The causes behind ECN bleaching are multiple and hard to identify, from network equipment bugs to debatable traffic engineering choices and packet manipulations to human error.

For example, bleaching could occur from mistakes such as overwriting the whole ToS field when dealing with DSCP instead of changing only DSCP (remember that DSCP and ECN together compose the ToS field in the IP header).
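The DSCP mistake described above is easy to see at the bit level. In the sketch below, the upper six bits of the ToS byte carry DSCP and the lower two carry ECN; the helper names are hypothetical, and the "overwriting" function exists only to reproduce the error, not to recommend it.

```python
ECN_MASK = 0b11   # lower two bits of the ToS byte
DSCP_SHIFT = 2    # DSCP occupies the upper six bits


def split_tos(tos):
    """Split a ToS byte into (dscp, ecn)."""
    return tos >> DSCP_SHIFT, tos & ECN_MASK


def set_dscp_preserving_ecn(tos, new_dscp):
    """Correct remarking: change DSCP and keep the ECN bits as they were."""
    return ((new_dscp & 0x3F) << DSCP_SHIFT) | (tos & ECN_MASK)


def set_dscp_overwriting_tos(tos, new_dscp):
    """Faulty remarking: rewrites the whole byte, which zeroes (bleaches) ECN."""
    return (new_dscp & 0x3F) << DSCP_SHIFT


# A packet marked DSCP AF11 (10) with ECN ECT(0) (binary 10), remarked to DSCP EF (46).
original = (10 << DSCP_SHIFT) | 0b10

print(split_tos(set_dscp_preserving_ecn(original, 46)))   # ECN bits survive
print(split_tos(set_dscp_overwriting_tos(original, 46)))  # ECN bits are cleared
```

Both functions produce the requested DSCP value, which is why this kind of bug can go unnoticed: the difference shows up only in the two ECN bits.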

Nowadays, network operators have a good number of tools to debug ECN bleaching from their end (such as those listed here) – including Catchpoint’s Pietrasanta Traceroute. The large-scale measurement campaign presented here is an example of a worldwide campaign to validate ECN readiness. Individual network operators can run similar measurement campaigns across networks that are important to them (for example, customer or peering networks).

The findings presented here are based on running tests using Catchpoint’s enhanced traceroute, Pietrasanta Traceroute, through the Catchpoint IPM portal to collect data from over 500 nodes located in more than 80 countries all over the world. By running traceroutes on Catchpoint’s global node network, we are able to determine which ISPs, countries and/or specific cities are having issues when passing ECN marked traffic. The results demonstrate the view of ECN bleaching globally from Catchpoint’s unique, partial perspective. To our knowledge, this is one of the first measurement campaigns of its kind.

Beyond the scope of this campaign, Pietrasanta Traceroute can also be used to determine whether there is incipient congestion or any other alteration along the path, and to gauge the level of support for more accurate ECN feedback, including whether the destination transport layer (either TCP or QUIC) supports it.